We take technology for granted. That's not wrong, but it fascinates me to no end that the technology we take for granted today was once heralded as the pinnacle of human ingenuity. Electricity. The printing press. The motor car. The airplane. The internet. The mobile phone. No magic is spared the wrath of human boredom.

After a recent rabbit-hole dive into human sound perception, I wondered how people reacted when sound recording and playback were first invented.

How it all began

Way back in the 1700s, if you wanted to hear a sound, the only way to do that was to go directly to the source. For music - find someone to play an instrument or sing a song for you. For hearing someone speak - go visit them in person. And if you couldn't do that? Too bad.

Then over the course of the 1800s, a bunch of French and American blokes had a grand idea - "Wouldn't it be nice if you could decouple the creation of the sound from the hearing of the sound?" This eventually led to Thomas Edison creating the phonograph in 1877 - a device that could capture sound waves and then play them back later.

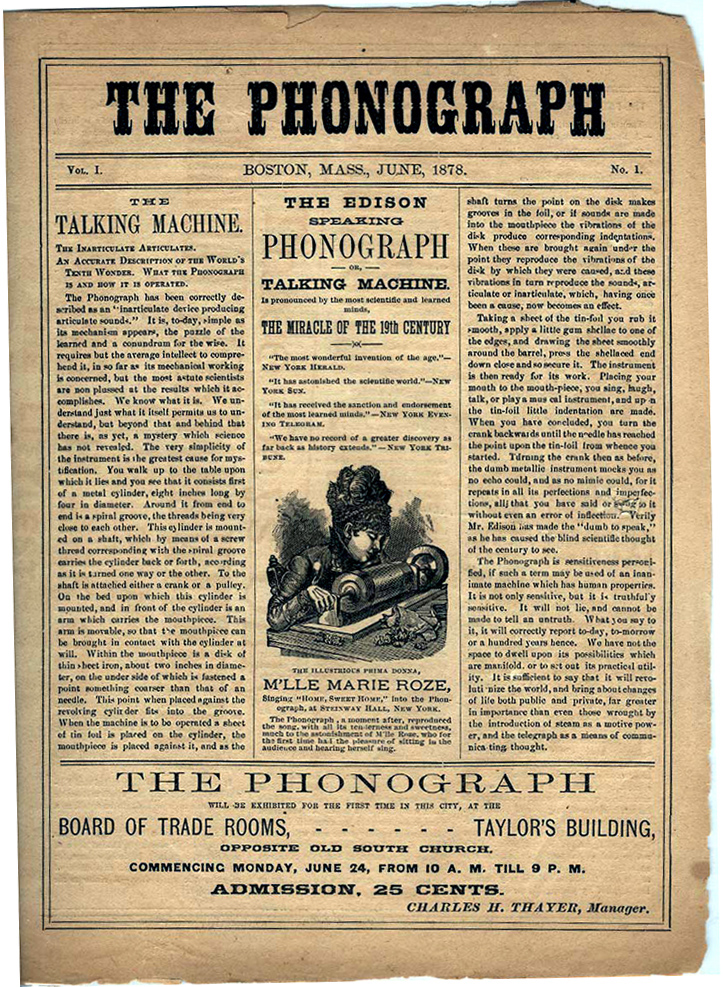

Newspaper announcement of the Edison Phonograph exhibition - Edison Tinfoil

Have a look at some of those quotes! "We have no record of a greater discovery as far back as history extends" - The New York Tribune

And like any exciting new technology, it inevitably had its detractors too. From the Smithsonian magazine:

Some social critics argued that recorded music was narcissistic and would erode our brains. “Mental muscles become flabby through a constant flow of recorded popular music,” as Alice Clark Cook fretted; while listening, your mind lapsed into “a complete and comfortable vacuum.”

Others worried it would kill off amateur musicianship. If we could listen to the greatest artists with the flick of a switch, why would anyone bother to learn an instrument themselves? “Once the talking machine is in a home, the child won’t practice,”

We now know for a fact that this isn't true - amateur musicianship has flourished as a human artistic endeavour. Turns out, the human desire to express creativity is pretty resilient!

The phonograph was still pretty inconvenient because in order to hear the sound, you needed to manually turn the crank. The sound was encoded ("cut") into cylinders via a cutting stylus. When the cylinder was rotated (manually), it would vibrate a diaphragm according to the cut and send those vibrations back into the air.

Once the genie was out of the bottle though, there was no stopping it. The world embraced the idea and collectively refined it, and with each iteration the ease of use and quality kept getting better. Alexander Graham Bell's Volta Laboratory improved on it with the graphophone, which recorded on wax rather than tinfoil. Cylinders gave way to flat disc records from the 1890s (shellac at first, with vinyl arriving in the late 1940s), then came the Electrical Era (with signal amplification), then came the Magnetic Era (reel-to-reel and cassette tapes), and then finally in the 1970s, we began to enter the Digital Era (with CDs following in 1982). And that's where we still are today, albeit with UX improvements (like streaming).

How do mobile phones today do it?

Today, sound recording and storage are ubiquitous thanks to the mobile phone. I previously wrote about how the human ear receives audio and transmits the signal to the brain. Many of the principles of the outer ear remain the same even for modern mics.

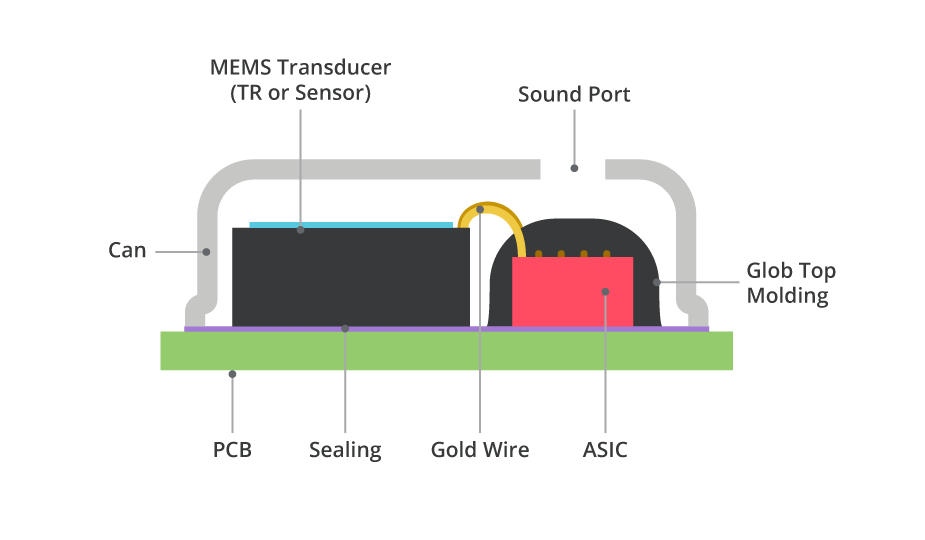

In mobile phones, you'll typically find a MEMS (micro-electro-mechanical system) microphone which acts as the "ear" of the device. Similar to the ear drum, the mic has a diaphragm - a thin membrane which picks up incoming sound wave vibrations.

What's different though is that there's a backplate a small distance behind the diaphragm. This backplate is kept charged. This combination (diaphragm + backplate) acts like a capacitor (i.e. two conductive plates separated by an insulator) and creates an electric field inside it. When sound enters the mic, it causes the diaphragm to move in proportion to the amplitude of the incoming wave. This varies the distance between the diaphragm and the backplate, which in turn varies the capacitance: the smaller the gap, the higher the capacitance. The change in capacitance is then converted to an electrical signal. This signal is usually pretty weak, so there's typically an ASIC (application-specific integrated circuit) to amplify it and then convert it to a digital signal.
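To make the gap-to-capacitance relationship concrete, here is a minimal sketch using the ideal parallel-plate formula C = ε₀ · A / d. Real MEMS capsules are more complicated than this, and the plate area and gap below are hypothetical values picked purely for illustration, not figures from any datasheet.

```python
# Permittivity of free space in farads per metre (physical constant).
EPSILON_0 = 8.854e-12


def plate_capacitance(area_m2: float, gap_m: float) -> float:
    """Ideal parallel-plate capacitance with an air gap: C = epsilon_0 * A / d."""
    return EPSILON_0 * area_m2 / gap_m


# Hypothetical dimensions, chosen only for illustration.
AREA = 1e-6      # 1 square millimetre of diaphragm
REST_GAP = 4e-6  # 4 micrometre gap when no sound is present

# Positive displacement means the diaphragm moves toward the backplate.
for displacement in (-0.1e-6, 0.0, 0.1e-6):
    gap = REST_GAP - displacement
    picofarads = plate_capacitance(AREA, gap) * 1e12
    print(f"gap {gap * 1e6:.1f} um -> {picofarads:.3f} pF")
```

The capacitance rises as the diaphragm moves closer to the backplate and falls as it moves away, which is the variation the ASIC turns into a signal.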

Analog to Digital Conversion

To actually make the sound usable and interpretable by the phone, we need to convert the analog sound wave into a digital representation.

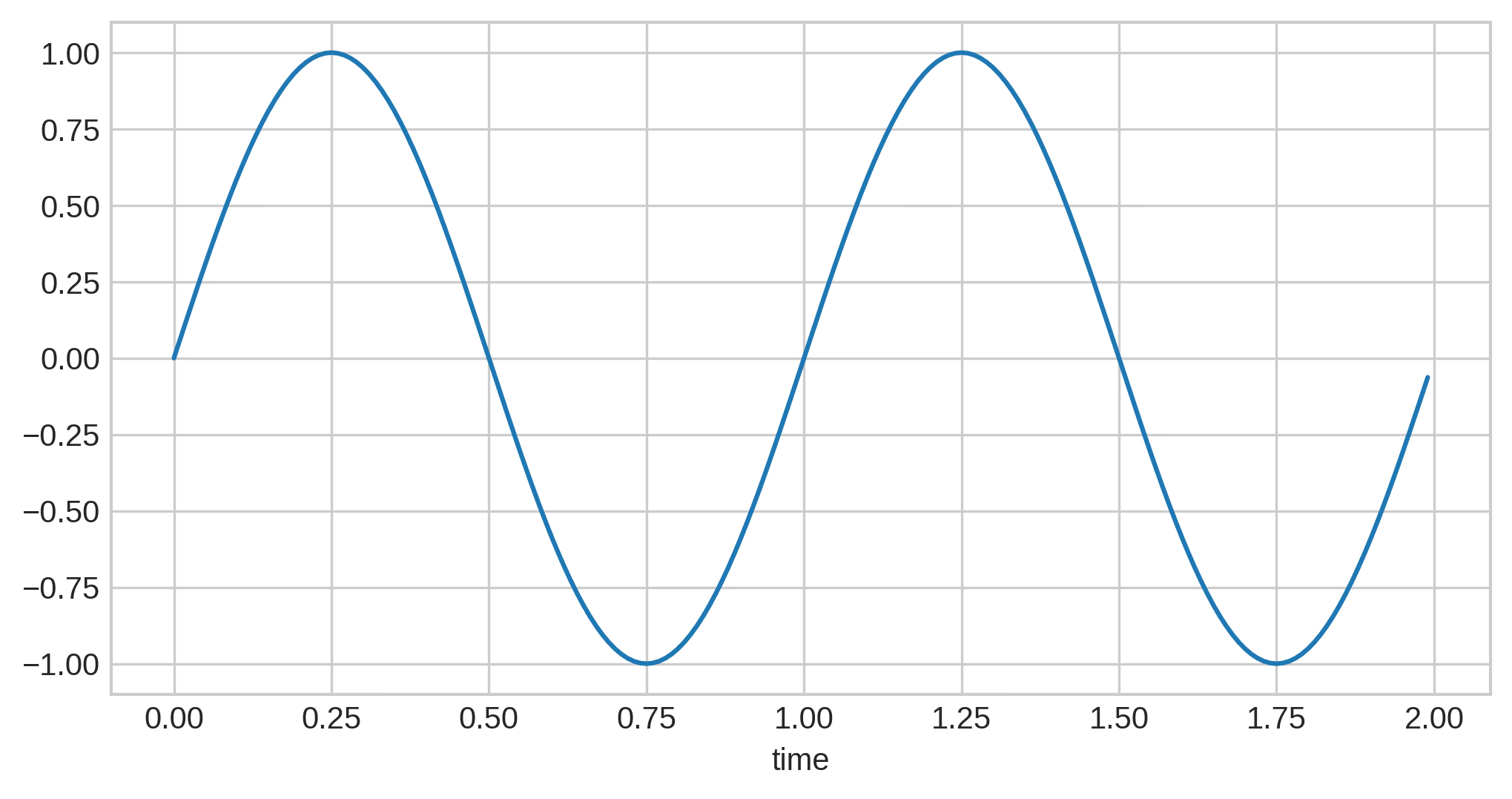

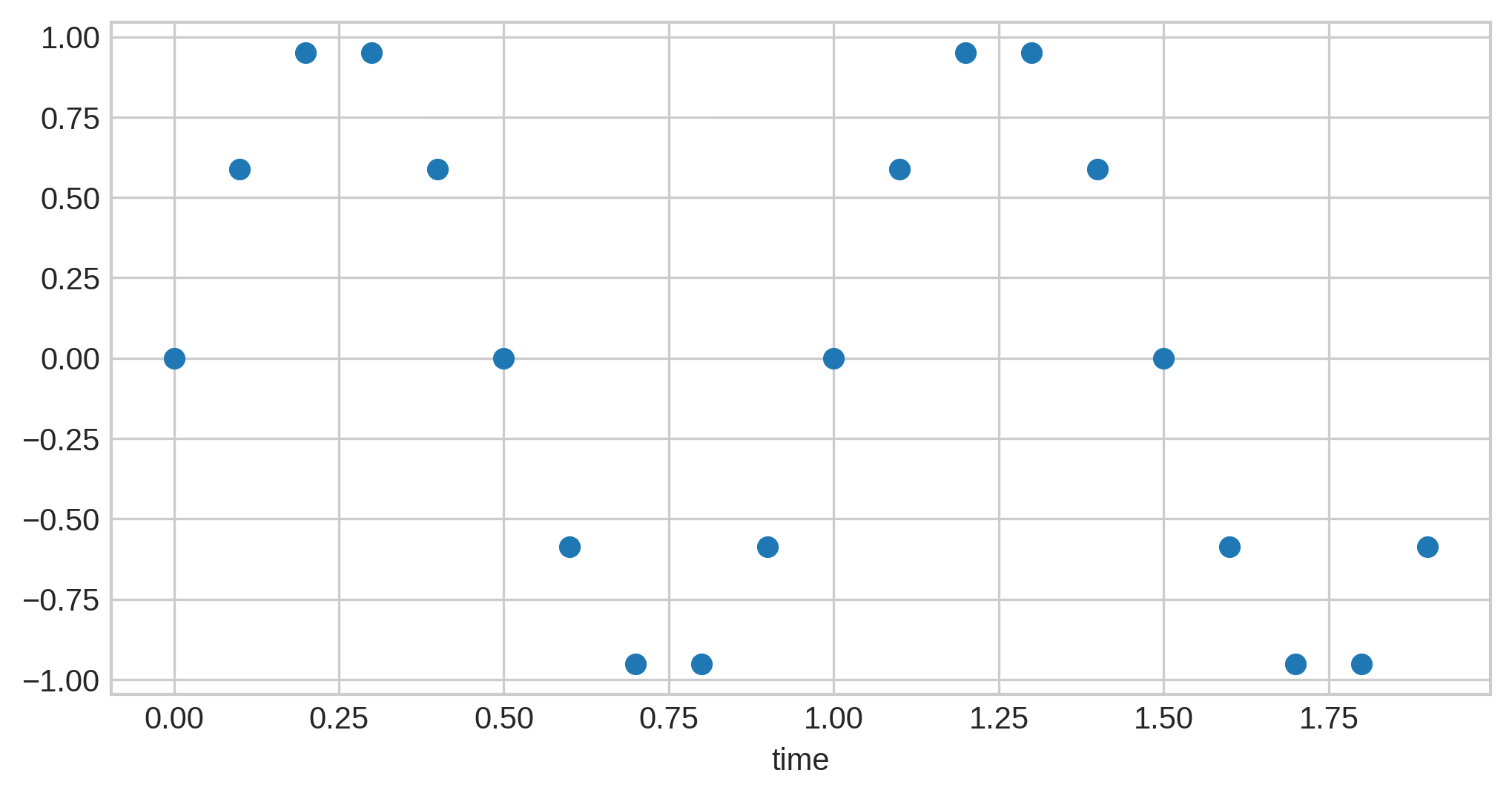

This involves "sampling" the audio signal at regular intervals - i.e. we take a snapshot of what the wave looks like at a particular point of time. At a high enough sampling frequency, the digital version becomes a "good enough" approximation of the analog wave to the extent that the difference wouldn't be apparent to the human ear.

(An audio waveform, and its sampled version. From Audio Storage, Refi64)
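As a rough sketch of what sampling means in practice, the snippet below takes snapshots of a pure sine tone at regular intervals. The function name `sample_sine` and the 440 Hz test tone are my own choices for illustration; a real phone samples the electrical signal coming from the mic rather than a formula.

```python
import math


def sample_sine(freq_hz: float, sample_rate_hz: int, duration_s: float) -> list[float]:
    """Return snapshots of a sine wave taken every 1 / sample_rate_hz seconds."""
    num_samples = round(sample_rate_hz * duration_s)
    return [
        math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
        for i in range(num_samples)
    ]


# 10 milliseconds of a 440 Hz tone at the CD sample rate of 44,100 Hz.
samples = sample_sine(440.0, 44_100, 0.01)
print(len(samples))  # 441 snapshots
print([round(s, 3) for s in samples[:5]])
```

A higher sample rate simply means more snapshots per second, so the list traces the original wave more closely.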

At each sample, we capture a representation (the amplitude) of the signal at that point. This could be done with varying amounts of precision. The bit depth (i.e. the number of bits recorded in each sample) represents how precisely we depict each sample.
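Here is a small sketch of how bit depth limits precision, assuming samples are normalised to the range -1.0 to 1.0 and stored as signed integers. The helper `quantize` is hypothetical and uses plain rounding; real converters add refinements (such as dithering) that are left out here.

```python
def quantize(sample: float, bit_depth: int) -> int:
    """Map a sample in [-1.0, 1.0] to a signed integer of the given bit depth."""
    max_level = 2 ** (bit_depth - 1) - 1     # e.g. 32767 for 16-bit audio
    clipped = max(-1.0, min(1.0, sample))    # out-of-range input is clipped
    return round(clipped * max_level)


def quantization_error(sample: float, bit_depth: int) -> float:
    """Absolute difference between a sample and its quantized reconstruction."""
    max_level = 2 ** (bit_depth - 1) - 1
    return abs(sample - quantize(sample, bit_depth) / max_level)


for bits in (4, 8, 16):
    print(bits, quantize(0.3, bits), quantization_error(0.3, bits))
```

For this sample the error shrinks as the bit depth grows, which is the sense in which more bits per sample depict the wave more precisely.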

So overall, the information content in the digital representation can be measured by the bitrate (in bits per second). Mathematically:

bit_rate = bit_depth * sample_rate * num_audio_channels
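As a quick worked example, plugging the standard CD audio parameters (16-bit samples, 44,100 samples per second, 2 channels) into the formula above gives the familiar 1,411 kbit/s figure. The function name `bit_rate` just mirrors the formula and is not from any library.

```python
def bit_rate(bit_depth: int, sample_rate_hz: int, num_audio_channels: int) -> int:
    """Uncompressed bitrate in bits per second."""
    return bit_depth * sample_rate_hz * num_audio_channels


cd_bits_per_second = bit_rate(16, 44_100, 2)
print(cd_bits_per_second / 1000)  # 1411.2 kbit/s

# Storage needed for one minute of uncompressed CD audio, in bytes.
bytes_per_minute = cd_bits_per_second * 60 // 8
print(bytes_per_minute)  # 10584000, i.e. roughly 10.6 MB
```

Note that this formula describes uncompressed audio only; lossy formats such as MP3 or AAC choose their bitrate as an encoder setting instead.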

As a general rule of thumb (with some caveats), higher bitrate -> more accurate representation of the source audio signal.

Bitrate numbers you should know today:

- Spotify Premium - up to 320 kbit/s (Source)

- CD Audio - 1,411 kbit/s (16-bit, 44.1 kHz, stereo, uncompressed)

- MP3 - up to 320 kbit/s

- AAC Bluetooth Codec - up to 320 kbit/s

- Vinyl - 128 kbit/s (this is analog so this isn't a fair comparison, but this is an indirect estimation based on the dynamic range of vinyl)

Interestingly, by raw bitrate, CD audio is the clear winner on this list. The streaming and Bluetooth figures are for lossy-compressed audio, so the numbers aren't a like-for-like measure of quality, but it still means we've technically regressed in audio fidelity in recent years, with the tradeoff being the superior UX of streaming and wireless headphones. I doubt anyone beyond the most devout audiophiles could tell the difference in audio quality. I don't think I could. ¯\(ツ)/¯

Other References: